Setup

Set your MCPJam API key as an environment variable. EvalTest and EvalSuite will auto-save results when this key is available.

MCPJAM_API_KEY enables result uploads.
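As a rough illustration, a test runner could check for the key up front and log whether uploads are expected. The helper below is hypothetical and not part of the MCPJam SDK. Treating a blank value as unset is an assumption of this sketch, and the SDK's own check may differ.

```typescript
// Hypothetical helper, not an MCPJam SDK export.
// `EnvVars` mirrors the shape of Node's process.env.
type EnvVars = Record<string, string | undefined>;

/** Returns true when MCPJAM_API_KEY is present and not blank. */
function hasMcpjamApiKey(env: EnvVars): boolean {
  const key = env["MCPJAM_API_KEY"];
  // Assumption: whitespace-only values are treated as "not set".
  return typeof key === "string" && key.trim().length > 0;
}
```

In a Node process you would pass `process.env` as the argument, for example to print a warning in CI when results will not be uploaded.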

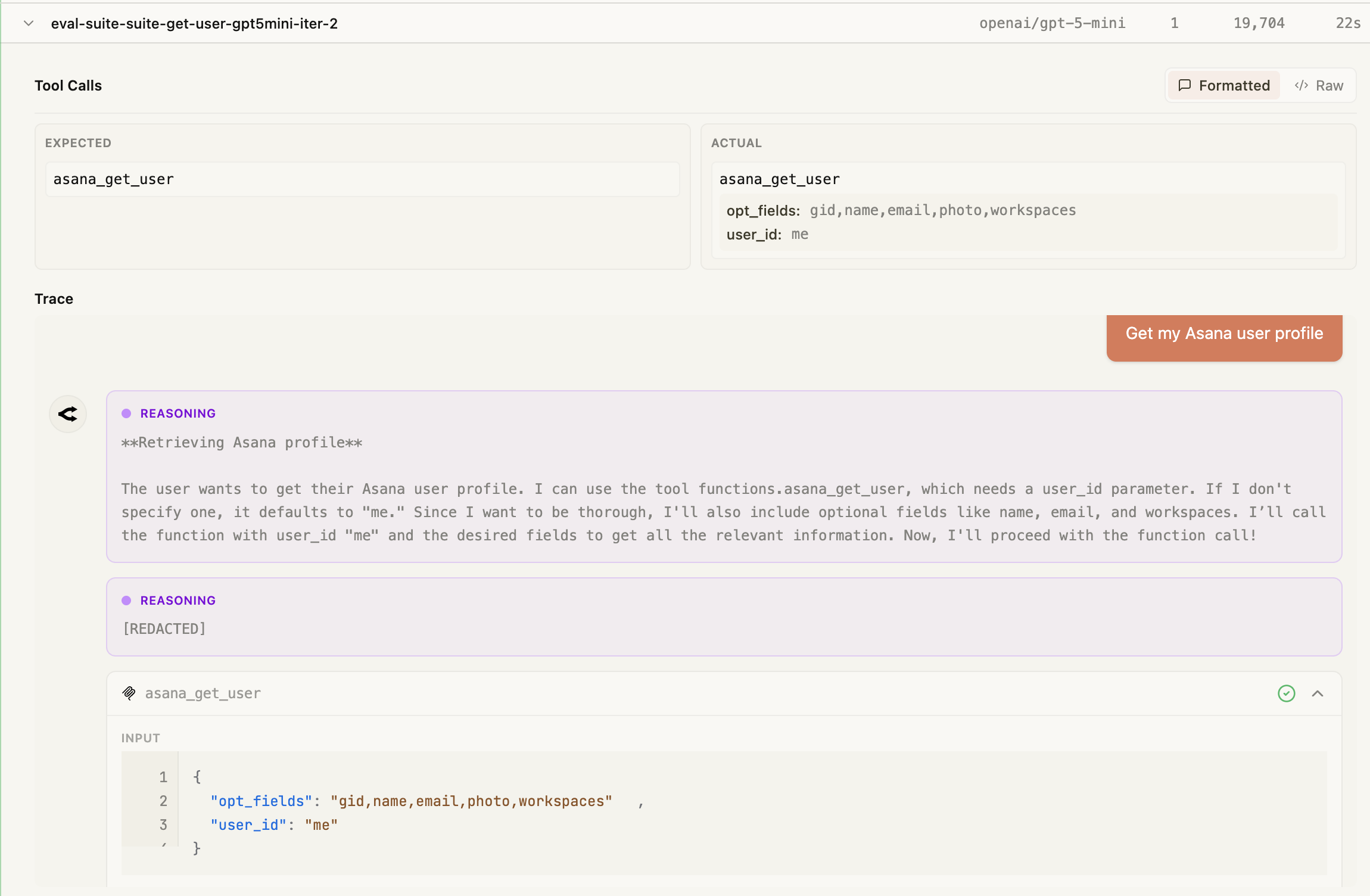

Attach the connected MCPClientManager to TestAgent (or pass agent / mcpClientManager to manual reporting APIs) when you need either of the following:

- MCP App / widget replay in Evals traces: After each MCP App tool call, the agent uses the manager’s readResource to fetch HTML from the tool’s ui.resourceUri and fills widgetSnapshots on PromptResult. Without the manager, traces still upload (messages + spans) but widgets will not replay in the dashboard. The tool’s JSON result alone is not enough for offline iframe replay.
- Replay credentials (authenticated HTTP MCP): The SDK can persist server connection details for debugging and reruns. You do not need to build a second secret object yourself; replay config is derived automatically when the agent or manager is attached.

Auto-Save from EvalTest

When MCPJAM_API_KEY is set, EvalTest.run() automatically saves results.

A tool error marks the result as passed: false unless you set failOnToolError: false on the mcpjam object.

For authenticated HTTP servers:

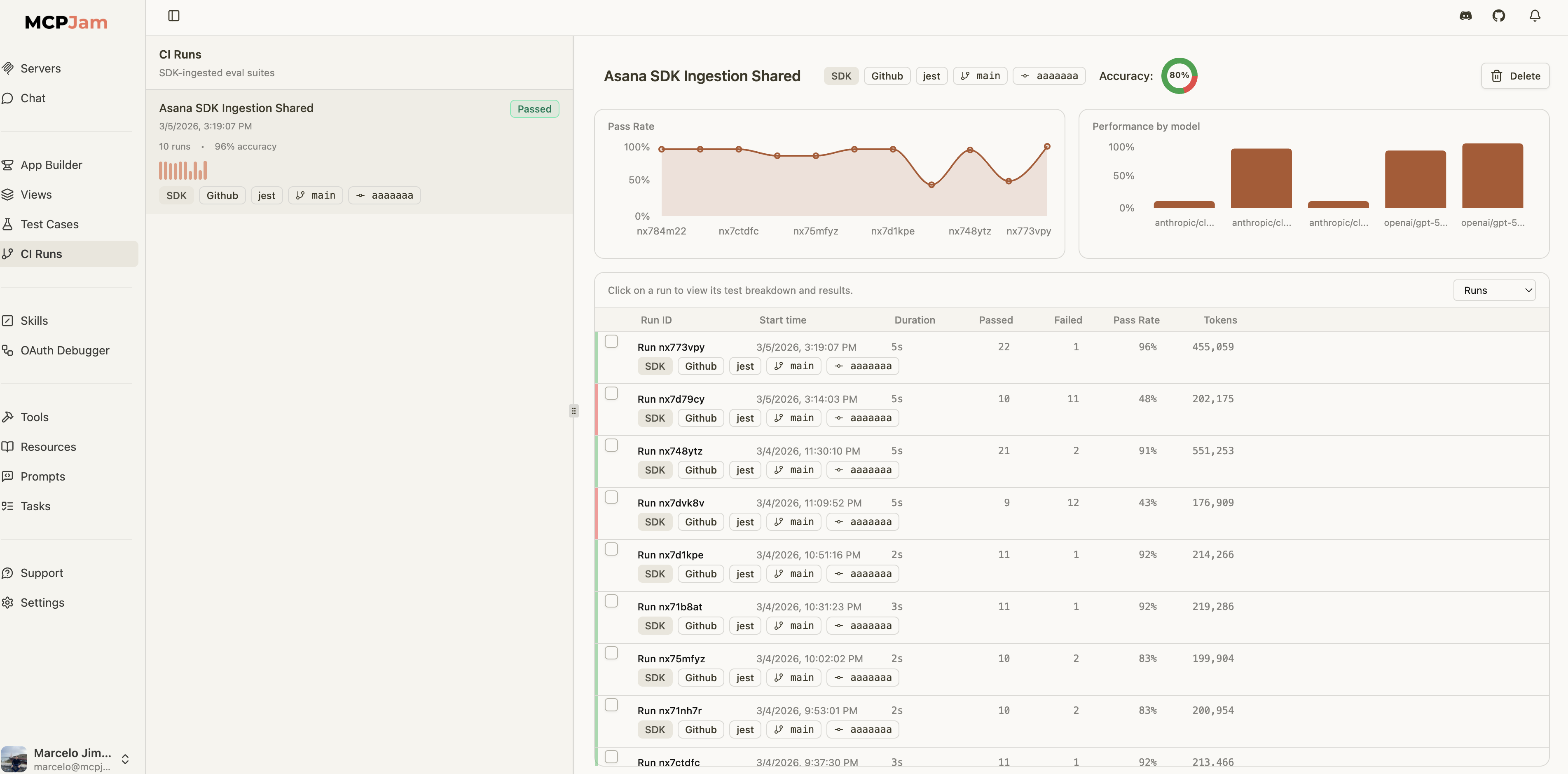

Auto-Save from EvalSuite

Suites can be configured at construction or run time. When tests run as part of a suite, individual EvalTest auto-saves are suppressed to avoid duplicate uploads. The suite consolidates all test results into a single run.

Manual Save APIs

For more control (custom test runners, CI post-steps, or framework-agnostic flows), the SDK provides dedicated APIs.

reportEvalResults() and createEvalRunReporter() resolve replay credentials in this order:

1. serverReplayConfigs, if you pass it explicitly
2. agent.getServerReplayConfigs()
3. mcpClientManager.getServerReplayConfigs()

In most cases, pass agent or mcpClientManager and let the SDK derive replay credentials automatically. Use serverReplayConfigs only as an advanced override.
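The resolution order described above can be sketched as a small precedence function. This is only a model of the documented order, not the SDK's implementation. The `ReplayConfig`, `ReplayConfigSource`, and `ReportOptions` types are hypothetical stand-ins, and the return shape of `getServerReplayConfigs()` is assumed here to be an array or `undefined`.

```typescript
// Hypothetical stand-in types; the real SDK shapes may differ.
interface ReplayConfig {
  serverName: string;
}

interface ReplayConfigSource {
  // Assumed return shape for illustration only.
  getServerReplayConfigs(): ReplayConfig[] | undefined;
}

interface ReportOptions {
  serverReplayConfigs?: ReplayConfig[];
  agent?: ReplayConfigSource;
  mcpClientManager?: ReplayConfigSource;
}

function resolveReplayConfigs(
  options: ReportOptions,
): ReplayConfig[] | undefined {
  // 1. An explicit override wins.
  if (options.serverReplayConfigs !== undefined) {
    return options.serverReplayConfigs;
  }
  // 2. Otherwise ask the agent.
  const fromAgent = options.agent?.getServerReplayConfigs();
  if (fromAgent !== undefined) {
    return fromAgent;
  }
  // 3. Finally fall back to the client manager.
  return options.mcpClientManager?.getServerReplayConfigs();
}
```

Falling through to the manager when the agent returns `undefined` is an assumption of this sketch; the documented behavior only fixes the order in which the three sources are consulted.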

When replay configs are inferred from agent or mcpClientManager, the SDK limits them to the serverNames you attach to the run when serverNames is provided.
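To make the serverNames restriction concrete, here is a minimal sketch of the filtering step. The `NamedReplayConfig` type and the `limitToServerNames` helper are hypothetical and only model the documented behavior: inferred configs are narrowed to the named servers, and left untouched when no serverNames are given.

```typescript
// Hypothetical stand-in for a replay config keyed by server name.
interface NamedReplayConfig {
  serverName: string;
}

function limitToServerNames<T extends NamedReplayConfig>(
  inferred: T[],
  serverNames?: string[],
): T[] {
  // No serverNames provided: keep every inferred config.
  if (serverNames === undefined) {
    return inferred;
  }
  // Otherwise keep only configs whose server is attached to the run.
  const allowed = new Set(serverNames);
  return inferred.filter((config) => allowed.has(config.serverName));
}
```

Under this model, credentials for servers that are connected to the manager but not part of the run are not included in the upload.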

Manual reporters (Vitest/Jest hooks): Pass agent or mcpClientManager into createEvalRunReporter — not only on TestAgent — and call await reporter.finalize() before await manager.disconnectAllServers() so replay config is still available at upload time. See Replay metadata for the MCPJam UI.
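The ordering requirement can be captured in one teardown helper. The sketch below uses minimal stand-in interfaces (`Finalizable`, `Disconnectable`) that expose only the two methods named above; the helper name `finalizeThenDisconnect` is hypothetical, and the real reporter and manager objects have more surface than shown here.

```typescript
// Minimal stand-ins exposing only the methods this sketch needs.
interface Finalizable {
  finalize(): Promise<void>;
}

interface Disconnectable {
  disconnectAllServers(): Promise<void>;
}

async function finalizeThenDisconnect(
  reporter: Finalizable,
  manager: Disconnectable,
): Promise<void> {
  try {
    // Upload first, while replay config can still be read from the manager.
    await reporter.finalize();
  } finally {
    // Always release connections, even if the upload throws.
    await manager.disconnectAllServers();
  }
}
```

A helper like this could be called from a Vitest or Jest `afterAll` hook so the two steps cannot be reordered by accident. Using `try`/`finally` is a design choice of this sketch: a failed upload still surfaces as an error, but server connections are not leaked.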

CI Metadata

Attach CI/CD context to your eval runs for traceability in the dashboard:
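As one possible way to gather that context, the sketch below builds a metadata object from GitHub Actions' default environment variables. The `CiMetadata` shape and its field names are hypothetical and chosen for illustration; check the Saving Results Reference for the fields the SDK actually accepts.

```typescript
// Mirrors the shape of Node's process.env.
type CiEnv = Record<string, string | undefined>;

// Hypothetical metadata shape; the SDK's field names may differ.
interface CiMetadata {
  provider: string;
  branch: string | undefined;
  commitSha: string | undefined;
  runUrl: string | undefined;
}

function ciMetadataFromGitHubEnv(env: CiEnv): CiMetadata | undefined {
  // GitHub Actions sets GITHUB_ACTIONS=true on its runners.
  if (env["GITHUB_ACTIONS"] !== "true") {
    return undefined;
  }
  const server = env["GITHUB_SERVER_URL"];
  const repo = env["GITHUB_REPOSITORY"];
  const runId = env["GITHUB_RUN_ID"];
  // Build a link back to the workflow run when all parts are present.
  const runUrl =
    server && repo && runId
      ? `${server}/${repo}/actions/runs/${runId}`
      : undefined;
  return {
    provider: "github-actions",
    branch: env["GITHUB_REF_NAME"],
    commitSha: env["GITHUB_SHA"],
    runUrl,
  };
}
```

In a Node process you would pass `process.env` and forward the result to whichever metadata option your reporting call accepts. Other CI providers expose similar variables under different names.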

Next Steps

Running Evals

Learn about EvalTest, EvalSuite, and iteration strategies

Saving Results Reference

Full API reference for all saving and reporting methods